I happen to be one of those older software developers who saw the rise of XML. I even remember the older SGML standard, although I never used SGML. Version 1.0 of XML became an official standard in 1998. Once it became a standard, many companies started working on the Killer App that would let you work with XML without much of a hassle. And although many companies created their own XML parsers at first, not all of those parsers fully conformed to the standard. Those parsers disappeared fast enough too.

Right now, version 1.1 of XML is the latest standard. Yes, in 16 years not much has happened to this standard. And the changes that have been applied are more about supporting EBCDIC platforms and the newer Unicode definitions. There are discussions about a version 2.0 but it’s not likely to become a standard soon. Strange as it might sound, XML seems to be in decline if you look at how it’s used.

The power of XML was, of course, in the way you defined these files and in the transformations you could apply to them. While we used DTD files at first to define the structure of an XML file, some smart people came up with the XSD schema format, which allowed more flexibility and is itself an XML file. Combined with some nice graphical tools, XSD made it easier to define an XML file and to validate whether an XML file conforms to the proper structure. And I’ve made plenty of XSD files between 2000 and 2010 since my work required a lot of XML data exchanges.

Of course, transformations are also important and here we use stylesheets. An XSLT file is written in XML itself and defines how to convert an XML file to some other output format. In general, this output would be another XML file, an HTML document to display in a web browser, a simple text file or even a comma-separated file. And in some special cases it could even create a complete rich text document that you could open in Word. This meant that you could, for example, send an XML file to a server and the server would then process it. It would validate the file with a schema and could perform additional validation by using a stylesheet. If the file passed these validation stylesheets, other stylesheets could then be used to extract data from the XML and send it to other servers for further processing, while also generating documentation to return to the user. You could do a lot of processing with just XML files.

Of course, XML also became popular because more developers started to create web services. And they used the SOAP protocol for this, which is a slightly complex protocol that’s heavily dependent on XML standards. Since SOAP also had a built-in versioning mechanism, you could always check whether the client was still using the right SOAP definitions. You could even use several SOAP message formats on the same system with only the version number as the difference. It wasn’t easy to set up, but it worked extremely well.

And more has been developed to support XML further. XPath expressions allow you to point to specific elements within an XML document. With XQuery, you can execute queries on XML files and process the results. With namespaces you can even combine multiple XML definitions that use similar element names. And then we have things like XLink, XPointer and XForms, which have never been very popular.
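To give an idea of what pointing at elements with XPath looks like, here is a minimal sketch in Python using the standard `xml.etree.ElementTree` module. The sample document and the `titles_for_year` helper are made up for this illustration, and ElementTree only supports a limited subset of XPath, so treat it as a sketch rather than a full XPath demonstration.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample document, invented for this illustration.
SAMPLE = """<library>
  <book year="1998"><title>XML Basics</title></book>
  <book year="2004"><title>XML 1.1 Notes</title></book>
</library>"""


def titles_for_year(xml_text, year):
    """Return the titles of all <book> elements with the given year attribute."""
    root = ET.fromstring(xml_text)
    # ".//book[@year='...']/title" selects the <title> child of every <book>
    # whose "year" attribute matches, anywhere below the root element.
    path = f".//book[@year='{year}']/title"
    return [title.text for title in root.findall(path)]


print(titles_for_year(SAMPLE, "1998"))  # prints ['XML Basics']
```

The path expression does the selecting here; the surrounding code only loads the document and collects the text of the matches.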

Between 2000 and 2010, it seemed that XML would become a dominant development technique. No more writing code in other programming languages that needed to be compiled, simply because XML with its stylesheets could serve as a fast scripting environment. Many platforms started to ship standard objects that could process XML files, and knowledge of XML became a hard requirement for developers. So, what changed?

Well, many developers consider the XML format a bit bulky, especially because tag names are often written twice: once to open the element and once to close it. Thus, if an element is called ‘NumberOfElements’ then you have to write <NumberOfElements>10</NumberOfElements> and that’s a lot of text to store the number 10. As a result, some developers shorten those tag names so the resulting XML is smaller. If you have 10,000 of these elements in your XML file, shortening the name to TOE saves 26 characters per element, thus 260,000 characters in total. That doesn’t seem like much, but developers feel they gain more from these kinds of optimizations. With modern multi-core processors and systems with 8 GB of RAM or more, such optimizations might make the code half a second faster, which you barely notice with web services, but still… Developers think it saves a lot. And yes, when resources are truly limited, it makes a lot of sense, but the modern mentality is that companies will just add a second server if one is too slow. Or more, if need be. This is because more hardware costs less than having developers optimize the code even further.
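The arithmetic behind those numbers is easy to check. The small Python sketch below rebuilds the element with both tag names and counts the difference; the helper names `element` and `saving_per_element` are just made up for this illustration.

```python
LONG_TAG = "NumberOfElements"  # 16 characters
SHORT_TAG = "TOE"              # 3 characters


def element(tag, value):
    """Build a simple XML element as text: <tag>value</tag>."""
    return f"<{tag}>{value}</{tag}>"


def saving_per_element(long_tag, short_tag, value=10):
    """Characters saved per element when the tag name is shortened."""
    return len(element(long_tag, value)) - len(element(short_tag, value))


per_element = saving_per_element(LONG_TAG, SHORT_TAG)
total = per_element * 10_000

print(per_element)  # prints 26 (13 characters saved, in both the open and close tag)
print(total)        # prints 260000
```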

These kinds of optimizations make XML files less human-readable while the purpose was to make this kind of data more readable. It becomes slightly worse when the XML file uses namespaces, since those namespaces are also shortened to just a few letters.

Another problem is the need to parse XML to extract the data. More and more companies are creating web applications that run within web browsers and rely heavily on JavaScript. These apps need to be able to run on multiple devices too. Unfortunately, not all browsers support parsing XML files, and the parsers in those that do are a bit complex to use. With regular expressions it’s still possible to extract some data from the XML, but if you need to fill a grid with 50 rows and 20 columns, things become really complex. To solve this, developers started to send data to web applications as JavaScript instead of XML. This could then be executed and thus the data would load itself into memory. Since JavaScript objects are less bulky than the begin/end tags of XML elements, this new format turned out to be very practical and thus JSON was born.
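To show the difference in effort, here is a rough sketch that loads the same two grid rows from XML and from JSON. It is written in Python rather than browser JavaScript, purely to keep it short and runnable; the row data and the function names are invented for this illustration.

```python
import json
import xml.etree.ElementTree as ET

# The same two hypothetical grid rows in both formats.
XML_ROWS = (
    "<rows>"
    "<row><id>1</id><name>Ada</name></row>"
    "<row><id>2</id><name>Linus</name></row>"
    "</rows>"
)
JSON_ROWS = '[{"id": 1, "name": "Ada"}, {"id": 2, "name": "Linus"}]'


def rows_from_xml(text):
    """Walk the element tree and rebuild each row by hand."""
    rows = []
    for row in ET.fromstring(text).findall("row"):
        rows.append({
            # XML content is always text, so numbers must be converted explicitly.
            "id": int(row.findtext("id")),
            "name": row.findtext("name"),
        })
    return rows


def rows_from_json(text):
    """JSON maps directly onto lists, dictionaries, numbers and strings."""
    return json.loads(text)


print(rows_from_xml(XML_ROWS) == rows_from_json(JSON_ROWS))  # prints True
print(len(XML_ROWS), len(JSON_ROWS))  # the XML text is the longer of the two
```

Both functions end up with the same data, but the XML version needs to know the structure and the types of every field, while the JSON version is a single call.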

The birth of JSON also demanded a change in web services. Since web applications call these services directly, it would be very clumsy if they had to set up SOAP messages and then parse the SOAP results. A newer, simpler kind of web service arose, which follows the REST architectural style. Of course, there are many other web service protocols, but REST seems to be becoming the new standard, especially because it’s a simpler approach that relies directly on the HTTP(S) protocol.

Of course, web applications have become more important these days because we’re getting more and more devices with all kinds of different operating systems, which all have web browsers. And, as I said, not all of those devices have a native XML parser built in. They do support JavaScript though, and as a result it becomes quite easy to develop web applications for all devices that use data in JSON format.

Of course, many devices also allow special platform-dependent apps that can be created with development tools for their specific platforms. For OS X and iOS-based devices you would use Objective-C, while you would use C++ or Java for Android devices. (Java is the preferred development platform for Android.) For Windows RT you would use .NET for Metro-style applications with either VB or C# as the primary language. This makes it a bit difficult to develop software that runs on all three platforms, but there are several parties who have created compilers that will compile platform-dependent executables from platform-independent code. Unfortunately, working with XML parsers still differs on all these platforms and those third-party compilers need to wrap their parsers around the built-in parsers of the underlying platform. That makes them a bit slow.

Since the number of operating systems has risen as the market keeps getting more and more new devices, it becomes more difficult to keep a single standard that’s supported by all those systems. And the XML standard is quite complex, so the different parsers might not all support the same things. In that regard, JSON is much simpler since its contents are basically just simple assignment statements. And these assignments are based on the JavaScript syntax, which also happens to be similar to the Java, C++, C# and Objective-C syntax. The main difference with these languages is the fact that JSON puts the field names between quotes too, which you can’t do inside these languages.

So, XML is becoming less useful because it requires too much work to use. JSON makes data serialization simpler and is less bulky. Especially now that developers are focusing more on web applications and apps for specific devices, the use of XML is in decline in favor of JSON and other solutions. But there’s one more reason why XML is in decline. And this is something within the .NET framework that’s called LINQ.

LINQ was implemented as a separate library for .NET version 3.5 and has become popular since then. Basically, LINQ allows you to keep data in structured objects and use simple queries to extract data from those objects, or to execute transformations on them. This is similar to XPath and XSLT, but now it’s part of your development language, giving you more choice in the functions that you can apply to the data. This is especially important for date fields, since XML doesn’t work well with date formats. LINQ actually makes extracting data from object trees quite easy and can be used on an XML document if you’ve read this document into memory in a proper XDocument or XmlDocument object. Thus, the need for XSLT to transform data has disappeared since you can do the same in C#, VB, F# or Oxygene.
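LINQ itself is a .NET feature, so the sketch below is not LINQ. It uses a Python generator expression as a rough analogue, only to illustrate the idea of querying an XML document from inside the host language, including a date comparison that relies on the language’s own date type. The sample orders and the `orders_since` function are assumptions made up for this example.

```python
import xml.etree.ElementTree as ET
from datetime import date

# Hypothetical sample data, invented for this illustration.
ORDERS = """<orders>
  <order id="a1" placed="2013-11-30"/>
  <order id="a2" placed="2014-02-15"/>
  <order id="a3" placed="2014-06-01"/>
</orders>"""


def orders_since(xml_text, cutoff):
    """Return the ids of all orders placed on or after the cutoff date."""
    root = ET.fromstring(xml_text)
    # The "query" is ordinary host-language code: the attribute text is
    # turned into a real date object and compared with the cutoff.
    return sorted(
        order.get("id")
        for order in root.iter("order")
        if date.fromisoformat(order.get("placed")) >= cutoff
    )


print(orders_since(ORDERS, date(2014, 1, 1)))  # prints ['a2', 'a3']
```

The filter works on real date values instead of strings, which is the kind of convenience that makes in-language queries attractive compared to doing everything in a stylesheet.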

The result is that .NET developers don’t have to learn about XML anymore. Their .NET knowledge combined with LINQ is more than enough. Since .NET also allows serialization to and from XML formats, it’s also quite easy to read and write XML files in .NET. You can import an existing XSD file into your .NET application and have it converted to code. But since most XML data starts out as objects in code that need to be written to XML, you will often see that developers just define the objects and add attributes that tell whether the object and its fields are XML elements or XML attributes. The serialization library then uses these object definitions to serialize to and from XML. Thus, knowledge of XML schemas is not a requirement anymore.
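The idea of annotating an object and letting a library produce the XML can be sketched in a few lines. This is not the .NET serializer; it is a small Python analogue that uses dataclass field metadata where .NET would use attributes. The `Customer` class, the `"xml"` metadata key and the `to_xml` function are all hypothetical names chosen for this illustration.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass, field, fields


@dataclass
class Customer:
    # The metadata tells the serializer how each field should appear in XML.
    id: int = field(metadata={"xml": "attribute"})
    name: str = field(metadata={"xml": "element"})
    city: str = field(metadata={"xml": "element"})


def to_xml(obj):
    """Serialize a dataclass instance to XML text, driven by field metadata."""
    root = ET.Element(type(obj).__name__)
    for f in fields(obj):
        value = str(getattr(obj, f.name))
        if f.metadata.get("xml") == "attribute":
            root.set(f.name, value)                   # becomes id="7"
        else:
            ET.SubElement(root, f.name).text = value  # becomes <name>Ada</name>
    return ET.tostring(root, encoding="unicode")


print(to_xml(Customer(7, "Ada", "London")))
# prints <Customer id="7"><name>Ada</name><city>London</city></Customer>
```

The developer only describes the object. The shape of the XML follows from that description, which is why no schema knowledge is needed for this kind of work.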

Because .NET development made knowledge of XML almost unnecessary, the popularity of XML is in decline. It’s still used quite often, but the knowledge that you need to do practical things with XML and XML tools is disappearing. And similar things are happening on other platforms. Java and PHP have also gained LINQ-like query facilities. And, as a result, those environments can work on structured objects instead of XML data. Thus, XML is only needed if the data needs to be sent to some other process and even then, other formats might be chosen too.

In fact, many developers are less concerned about the data format that’s used for inter-process communication. The system handles this for them and they just use a specific serialization library that does the bulk of the work. XML isn’t really declining, but fewer developers need knowledge about the XML format since development tools have nice wrappers around it that allow these developers to use XML without even realizing they’re using XML. It’s not XML that’s in decline. It’s the knowledge about XML that is in decline…

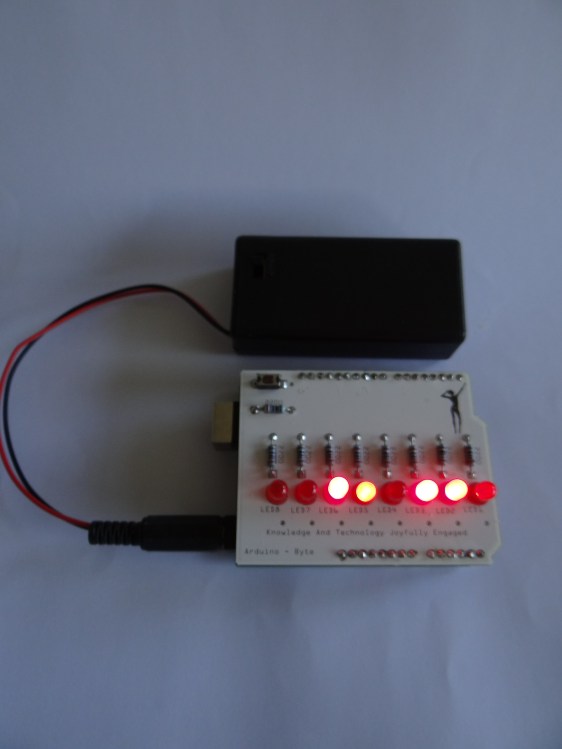

And yes, it could be possible to use this monitor from an Arduino board but the amount of programming you’d need would be huge, and the amount of memory the Arduino has would not allow you to do something complicated. So, if you want to create a photo frame with a special remote, the Raspberry Pi would be more practical. If you want to set the lights on your model train station based on the amount of light outside, the Arduino would be better.
